Hey {{first_name|Conscious Church Fam}}

Nano Banana is still blowing my mind (have any of you been playing with it yet?)

From paralysed patients moving robots with their thoughts, to Google dodging a Chrome break-up, to Apple leaning on Google Gemini to prop up Siri, here's a recap of this week's news.

In today's recap:

UCLA unveils a non-invasive BCI that lets paralysed people control robots

Google avoids losing Chrome in an antitrust ruling (but must change tactics)

Apple partners with Google Gemini to boost Siri’s search

Let’s dive in 👇

💭 Josh’s Musings

I just tried something and it actually worked. Nano Banana (an AI image-editing tool) edited the text on this email's thumbnail without me even opening my usual Adobe file. It matched the font perfectly and didn't leave any of those weird gaps.

Pretty helpful, especially when the text needs to be accurate.

One night this week I experimented with a specific workflow: generating a base image, then taking it into Nano Banana to create different scenes with the same character. The consistency it maintains while interpreting new scenarios is remarkable.

This used to mean training a custom model on multiple images just to get something decent. Now it spits out results in seconds.

After just under an hour of playing around, I had generated the initial images, motion elements, and jacket design you see here. (I spent additional time on other elements and the voiceover, but the core creative work happened fast.)

Look, I’m not a video editor or director of photography. But with tools like this, I was actually able to pull an idea from my head and flesh it out into something real. It’s not groundbreaking cinema, but it’s exactly what I envisioned.

I’m curious: have any of you experimented with Nano Banana or similar creative AI tools? What kind of results are you getting, and what workflows are you discovering?

🙌 Stay Curious, Stay Conscious, Stay Wild

Josh

LATEST NEWS

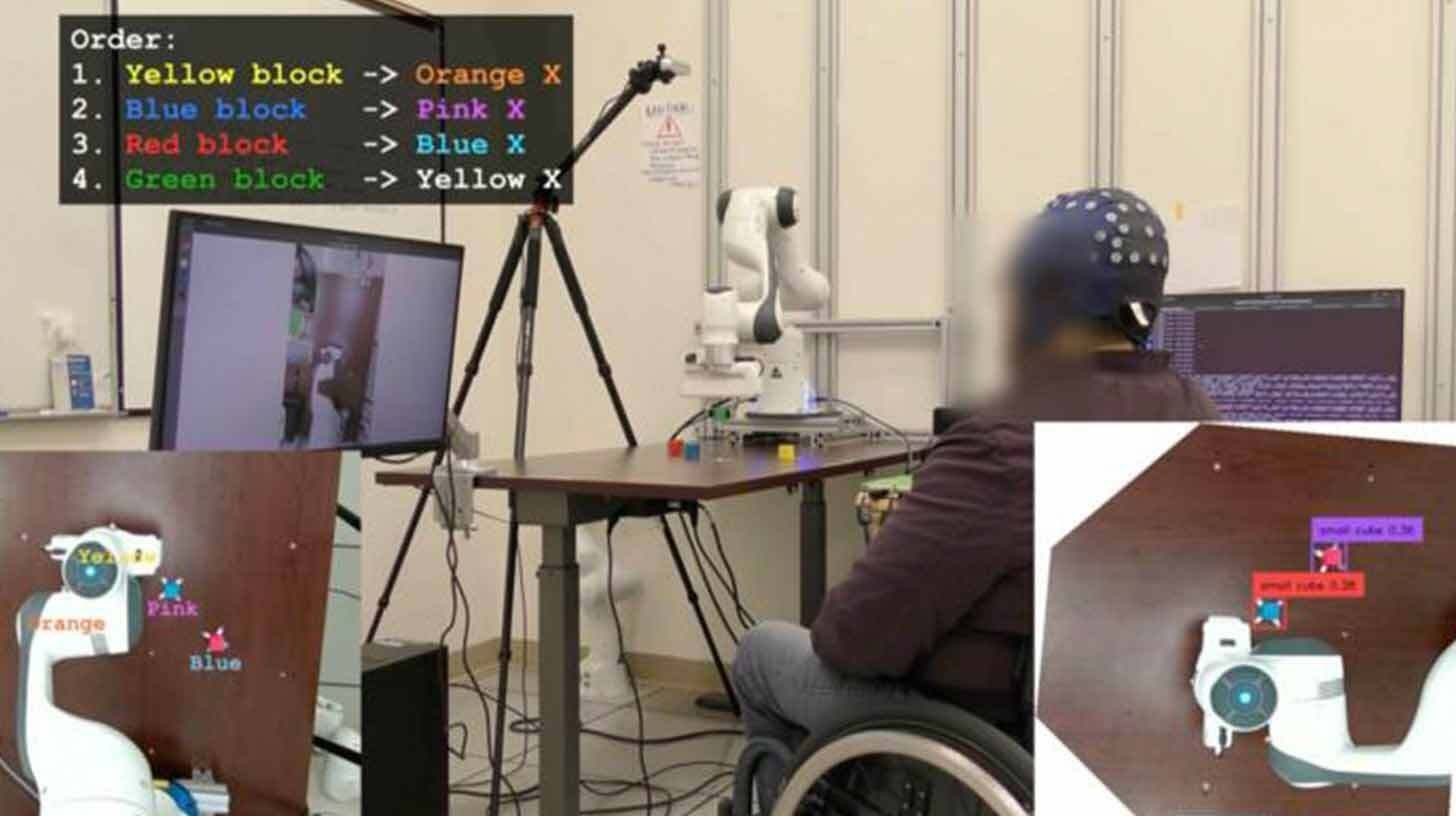

Image Source: UCLA

Recap:

UCLA engineers have developed a wearable brain–computer interface (BCI) that uses AI to interpret EEG signals, enabling paralysed users to move robotic arms and cursors with no surgery required.

The Details:

A custom EEG decoder, paired with a camera-based AI, interprets movement intent in real time

Tested with four users, including one paralysed participant who completed a robotic-arm task in 6.5 minutes with AI assistance (a task they could not complete without it)

Participants completed tasks nearly four times faster with AI support

Standard EEG caps replace invasive implants, removing surgical risk

Conscious Take:

This is massive. For decades, BCIs have been mostly invasive, experimental, or clunky. Now we’re seeing AI close the gap where brain signals are weak. It’s a glimpse of restoration: people regaining abilities they thought were lost. Of course, it also raises ethical questions about how far we should merge mind and machine. But how incredible is that?!

Image Source: Nano Banana | The Conscious Church

Recap:

A federal judge ruled that Google won’t be forced to sell Chrome or Android, despite the court’s earlier finding that it holds a monopoly in search. However, the company must loosen its exclusive search deals.

The Details:

Judge Amit Mehta noted that “the emergence of GenAI changed the course of this case”, since AI rivals like ChatGPT now pose a real threat to search

The DOJ’s push for asset sales was rejected as “overreach”

Google can still pay Apple and others for placement, but those deals can no longer be exclusive

Perplexity had even floated a $34.5bn offer for Chrome if it were up for grabs

Conscious Take:

It’s wild that GenAI is now reshaping antitrust law. The judge literally pointed to ChatGPT as the reason Google isn’t considered untouchable anymore. Rivals smelled an opportunity, but for now Chrome stays under Google’s roof. Perhaps this will free Google to push harder into Gemini-powered browsers.

Image Source: Nano Banana | The Conscious Church

Recap:

Bloomberg reports that Apple has struck a deal with Google to test Gemini models inside Siri’s AI search upgrade, aiming for a spring 2026 release.

The Details:

Project “World Knowledge Answers” will turn Siri into an answer engine (text, photos, video, local info)

Google’s Gemini would run on Apple’s private servers, a cheaper option than Anthropic’s £1.2bn-a-year proposal

Apple also dropped talks about acquiring Perplexity, opting to build its own search features instead

Meanwhile, Apple keeps losing AI researchers to Meta, OpenAI, and Anthropic

Conscious Take:

This feels like a strange moment. Apple, the company known for “we’ll do it ourselves”, is leaning on Google to catch up. Good for them, though, and I really hope we start to see some great innovation from Apple in this space.

Lord, in a world sprinting ahead, anchor us in Your wisdom. Help us lead not from fear, but from peace.

That's all for now.

Stay conscious,

Josh

P.S. If you liked this then please forward it on to someone you think would enjoy it. And if someone forwarded you this and you liked it, you can sign up here.